A/B Testing for E-commerce: Optimize Your Store for Conversions

The “Why” Behind A/B Testing for E-commerce: Unlocking Growth and Understanding

At its core, A/B testing is about understanding your customers and responding to their preferences with data, not assumptions. For e-commerce businesses, the stakes are high. Every visitor represents a potential sale, and every friction point can lead to a lost opportunity. Without A/B testing, even well-intentioned changes can inadvertently harm your conversion rates, leaving you guessing about their impact. With it, you gain a powerful tool for continuous improvement and a deeper understanding of what drives your audience.

Driving Data-Driven Decisions

One of the primary benefits of A/B testing for e-commerce is its ability to replace intuition with concrete data. Instead of debating whether a green or red “Add to Cart” button will perform better, you can test it. A properly run test will show, at a stated level of statistical confidence, which variation leads to more conversions. This scientific approach ensures that your optimization efforts are backed by evidence, reducing risk and maximizing the likelihood of positive outcomes.

Boosting Conversion Rates and Revenue

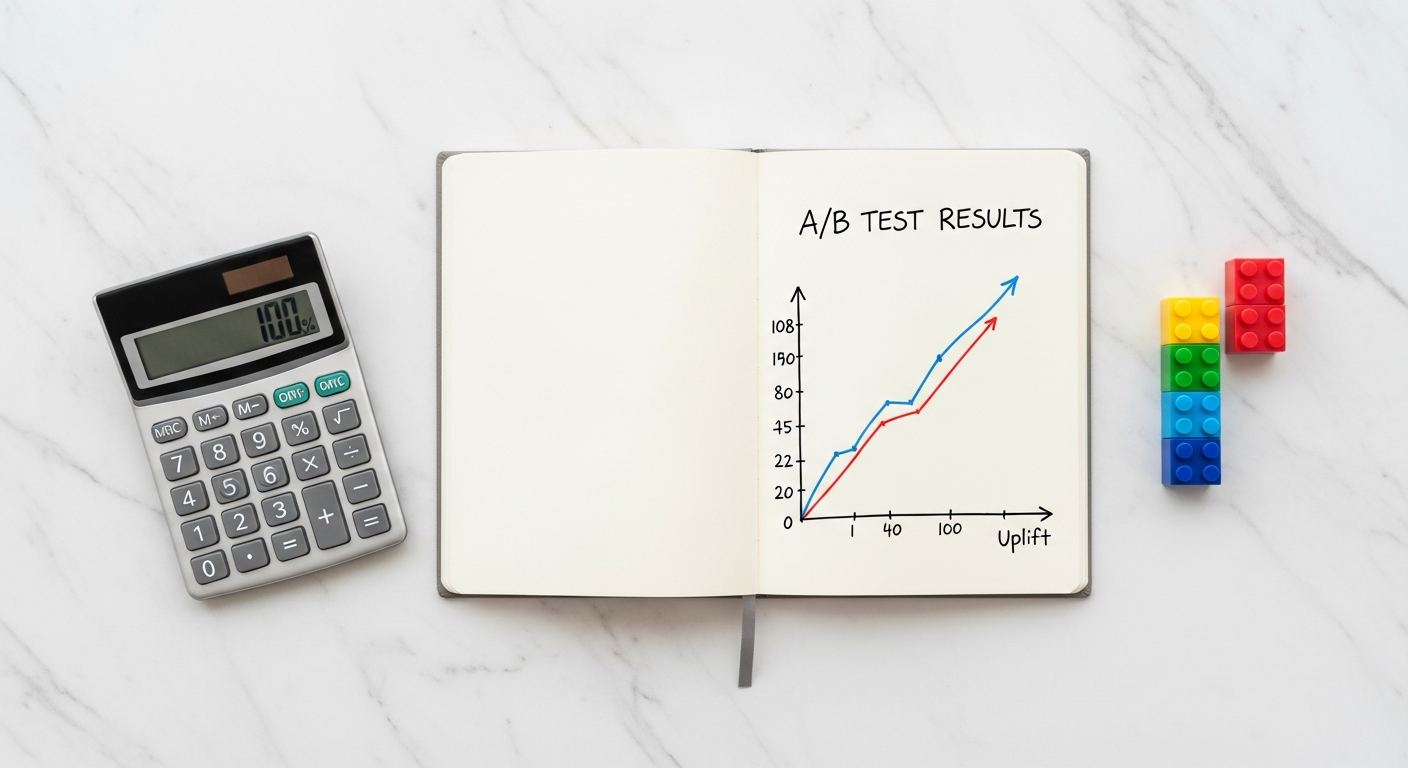

The most direct impact of effective A/B testing is a measurable increase in conversion rates. Even a marginal improvement can have a dramatic effect on your revenue. Consider an e-commerce store with 100,000 monthly visitors and a 1% conversion rate, generating 1,000 sales. If A/B testing helps increase that conversion rate to just 1.2%, you’re suddenly looking at 1,200 sales: a 20% increase in orders (and in revenue, assuming average order value holds steady) for the same amount of traffic, without any additional marketing spend. Over time, these small, iterative improvements compound, leading to significant growth.
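The arithmetic in this example is easy to reproduce for your own store. The sketch below uses the visitor count and conversion rates from the paragraph above; the function name is made up for illustration.

```python
def monthly_orders(visitors: int, conversion_rate: float) -> float:
    """Expected orders for a given traffic level and conversion rate."""
    return visitors * conversion_rate


visitors = 100_000
baseline = monthly_orders(visitors, 0.010)  # 1% conversion rate
improved = monthly_orders(visitors, 0.012)  # 1.2% conversion rate

# Relative lift: the change in orders as a fraction of the baseline.
relative_lift = (improved - baseline) / baseline

print(f"baseline={baseline:.0f} improved={improved:.0f} lift={relative_lift:.0%}")
```

Swap in your own traffic and conversion figures to see what a given lift would be worth before you invest in a test.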

Enhancing User Experience (UX)

A/B testing isn’t just about selling more; it’s also about creating a better experience for your customers. By testing different layouts, navigation structures, and content presentations, you can identify what makes your site easier to use, more engaging, and ultimately, more enjoyable. A positive user experience leads to higher customer satisfaction, reduced bounce rates, increased time on site, and improved brand loyalty.

Reducing Risk and Saving Resources

Launching major redesigns or new features without validation can be incredibly risky and expensive. A/B testing allows you to test significant changes on a small segment of your audience before rolling them out widely. If a new design performs poorly, you’ve limited its negative impact. If it performs exceptionally, you can deploy it with confidence, knowing it has been validated by real user behavior.

Actionable Tip: Start Small, Think Big

Don’t wait for a major redesign to start A/B testing. Begin with small, impactful elements like CTA button text or product image sizes. Document your hypotheses and results, and gradually build a culture of continuous optimization within your team. The cumulative effect of these small wins will be monumental.

What to A/B Test on Your E-commerce Store: High-Impact Areas

Virtually any element of your e-commerce store can be subjected to A/B testing. The key is to identify areas with the highest potential impact on your conversion goals. By focusing your efforts on these critical touchpoints, you can achieve significant improvements in user experience and sales performance.

1. Product Pages: The Conversion Battlefield

Product pages are where buying decisions are made, making them prime candidates for A/B testing.

- Product Images & Videos: Test different angles, lifestyle shots vs. white background, image carousels vs. single large image, and the presence or absence of product videos. Does a 360-degree view increase confidence?

- Product Descriptions: Experiment with length, tone (benefit-driven vs. feature-heavy), formatting (bullet points vs. paragraphs), and placement.

- Call-to-Action (CTA) Buttons: This is a classic. Test text (“Add to Cart,” “Buy Now,” “Get Yours”), color, size, shape, and placement. Does a more prominent CTA reduce friction?

- Pricing Display & Discounts: How you present prices can influence perception. Test original price strikethrough, discount percentage vs. absolute value, and urgency messaging (“Limited Stock!”).

- Social Proof: Test the placement, prominence, and filtering options for customer reviews, star ratings, and testimonials. Do product videos featuring customer testimonials perform better?

- Trust Badges: Security seals, payment method logos, and satisfaction guarantees can all be tested for their impact on perceived trustworthiness.

2. Homepage & Category Pages: First Impressions Matter

These pages are often the entry point for new visitors and critical for guiding them deeper into your site.

- Hero Banners & Promotions: Test different images, headlines, CTA text, and overall messaging. Does focusing on a specific product category outperform a general promotion?

- Navigation & Menu Structure: Experiment with main menu categories, sub-menu depth, and sticky navigation. Is a simpler menu more effective?

- Featured Products/Collections: Which products should be highlighted? Test dynamic recommendations vs. hand-picked selections.

- Search Bar: Prominence, placement, and auto-suggest features. Does a larger, more visible search bar encourage exploration?

3. The Checkout Flow: Minimizing Abandonment

The checkout process is notorious for abandonment. Optimizing it can yield significant conversion gains.

- Number of Steps: One-page vs. multi-step checkout. Does breaking it down into smaller steps reduce overwhelm?

- Form Fields: Which fields are essential? Can any be removed or made optional? Test auto-fill features.

- Progress Indicators: Does showing users where they are in the checkout process reduce anxiety?

- Guest Checkout Options: Is forcing account creation a barrier? Test the impact of a prominent guest checkout option.

- Shipping Options & Costs: Test how transparently and how early you present shipping costs and delivery times, and where you set free shipping thresholds.

- Payment Methods: Test the arrangement and visibility of payment options, and the effect of adding popular local payment gateways.

4. Site-Wide Elements: Consistent Optimization

Don’t overlook elements that appear across your entire site.

- Pop-ups & Overlays: Timing, design, messaging for newsletter sign-ups or exit-intent offers.

- Error Messages: How clear and helpful are your error messages? Can they guide users back on track?

- Mobile Responsiveness: Test different mobile-specific layouts and interactions.

Actionable Tip: Prioritize Based on Impact and Effort

When deciding what to A/B test, consider the potential impact a change could have (e.g., checkout flow vs. a minor typo) and the effort required to implement it. Start with high-impact, relatively easy-to-implement changes to build momentum and demonstrate value.
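One simple way to apply this tip is to score each idea by estimated impact divided by estimated effort. The sketch below is only an illustration: the 1-to-5 scales, the scoring rule, and the example ideas are assumptions, not a standard formula.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: int  # 1 (low) to 5 (high): estimated effect on the conversion goal
    effort: int  # 1 (easy) to 5 (hard): estimated implementation cost

    @property
    def priority(self) -> float:
        # Higher impact and lower effort both raise the score.
        return self.impact / self.effort


ideas = [
    Idea("Prominent guest checkout option", impact=5, effort=3),
    Idea("CTA button text", impact=3, effort=1),
    Idea("Minor typo fix", impact=1, effort=1),
]

# Highest-priority ideas first.
ranked = sorted(ideas, key=lambda idea: idea.priority, reverse=True)
for idea in ranked:
    print(f"{idea.priority:.2f}  {idea.name}")
```

The scores are rough estimates, so treat the ranking as a starting point for discussion rather than a verdict.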

How to Conduct an Effective A/B Test for E-commerce: A Step-by-Step Guide

Executing a successful A/B test requires a systematic approach. Skipping steps or making assumptions can lead to misleading results and wasted effort. Follow this guide to ensure your A/B testing for e-commerce efforts are robust and reliable.

Step 1: Define Your Goal and Identify a Problem

Before you even think about changing an element, clearly articulate what you want to achieve. Are you aiming to increase click-through rates (CTR) on a banner, reduce cart abandonment, or boost average order value (AOV)?

- Tools: Use analytics platforms (Google Analytics, Adobe Analytics) to pinpoint areas of friction (high bounce rates, low conversion rates on specific pages, lengthy checkout times). Heatmaps and session recordings (Hotjar, Crazy Egg) can visually show where users struggle.

- Example: “Our analytics show that only 5% of visitors who reach the product page add an item to their cart. Our goal is to increase this ‘Add to Cart’ rate.”

Step 2: Formulate a Clear Hypothesis

A hypothesis is a testable statement that predicts the outcome of your experiment. It should explain why you believe a change will lead to a specific result.

- Structure: “If I change [element X] to [variation Y], then [metric Z] will increase/decrease because [reason/customer psychology].”

- Example: “If I change the ‘Add to Cart’ button text from ‘Add to Cart’ to ‘I Want This!’ on our product pages, then the ‘Add to Cart’ rate will increase because the new text conveys a stronger sense of desire and urgency.”

Step 3: Choose Your Variable(s)

For a true A/B test, you should ideally test only one variable at a time. This allows you to isolate the impact of that specific change.

- Focus: If you change the button text and color, and conversions increase, you won’t know which change was responsible.

- Exception: Multivariate testing (MVT) allows testing multiple variables simultaneously, but it requires significantly more traffic and statistical expertise. Start with A/B.

Step 4: Create Your Variations

You’ll need at least two versions:

- Control (A): The original version of the page or element.

- Variation (B): The modified version incorporating your hypothesized change.

Ensure the variations are identical except for the single element you’re testing.

Step 5: Select Your A/B Testing Tool

Choose a platform that aligns with your technical capabilities and budget (more on this in the tools section below).

- Setup: Use the tool to split your incoming traffic (e.g., 50% to Control, 50% to Variation) and track the relevant metrics (e.g., ‘Add to Cart’ clicks, conversion rate).
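Most testing tools handle traffic splitting for you, but it helps to see the idea. A common approach is to hash a stable visitor identifier so that the same visitor always sees the same version. The sketch below shows that general technique, not how any particular platform implements it; the function name, the experiment key, and the visitor IDs are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, variation_share: float = 0.5) -> str:
    """Deterministically assign a visitor to 'control' or 'variation'."""
    # Including the experiment name keeps assignments independent across tests.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 8 hex digits (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 16**8
    return "variation" if bucket < variation_share else "control"


# The same visitor gets the same answer on every page view.
print(assign_variant("visitor-123", "cta-text"))

# Across many visitors the split approaches the requested share.
sample = [assign_variant(f"visitor-{i}", "cta-text") for i in range(10_000)]
print(sample.count("variation") / len(sample))
```

Because the assignment depends only on the visitor ID and the experiment name, no assignment table has to be stored, and returning visitors get a consistent experience.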

Step 6: Determine Sample Size and Duration

This is crucial for statistical significance. Stopping a test too early or running it with insufficient traffic can lead to false positives or negatives.

- Sample Size Calculators: Use online tools (available from most A/B testing platforms) to determine how many visitors or conversions you need based on your baseline conversion rate, the smallest improvement you want to be able to detect, your confidence level (typically 95%), and your statistical power (commonly 80%).

- Duration: Run the test for at least one full business cycle (e.g., 1-2 weeks) to account for day-of-week variations, and long enough to reach your calculated sample size. Avoid stopping a test just because one variation appears to be winning early.
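To see what a sample size calculator does behind the scenes, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The 80% power default and the example inputs (a 5% baseline and a 10% relative lift) are assumptions for illustration, and real calculators may use slightly different formulas.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH variant for a two-sided test of two proportions."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # 0.84 for 80% power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Detecting a 10% relative lift on a 5% baseline (5.0% -> 5.5%).
n = sample_size_per_variant(baseline_rate=0.05, relative_lift=0.10)
print(n)
```

For these inputs the requirement comes out at roughly 31,000 visitors per variant, which is why small expected lifts demand long tests on low-traffic stores. Larger expected lifts shrink the requirement quickly.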

Step 7: Run the Test

Launch your experiment and let the traffic flow. Monitor for technical issues, but resist the urge to interfere with the test before it reaches statistical significance and sufficient duration.

Step 8: Analyze Results and Draw Conclusions

Once the test concludes, use your A/B testing tool’s analytics to interpret the data.

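- Statistical Significance: This tells you how unlikely your observed difference would be if the variation actually had no effect. At a 95% confidence level, a difference this large would arise from random chance alone less than 5% of the time. Aim for 95% or higher.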

- Primary Metric: Focus on your main conversion goal. Did the variation statistically outperform the control?

- Secondary Metrics: Look at other metrics (bounce rate, time on page, AOV) to understand the broader impact.

- Segmentation: Did the variation perform differently for mobile vs. desktop users, or new vs. returning visitors?
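Your testing tool will normally report significance for you, but the underlying check for a conversion-rate metric can be sketched as a two-proportion z-test. The visitor and conversion counts below are invented for illustration, and your tool may use a different statistical method (for example, a Bayesian or sequential one).

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the assumption that A and B perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical result: control converts 1,000 of 20,000; variation 1,100 of 20,000.
z, p_value = two_proportion_z_test(conv_a=1000, n_a=20000, conv_b=1100, n_b=20000)
print(f"z={z:.2f} p={p_value:.3f} significant_at_95={p_value < 0.05}")
```

A p-value below 0.05 corresponds to the 95% threshold mentioned above. It does not tell you how large the true improvement is, so look at the size of the difference as well.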

Step 9: Implement or Iterate

- If the Variation Wins: Implement the winning change permanently. Document your findings.

- If the Control Wins or No Significant Difference: Don’t view this as a failure. You’ve learned something valuable about what doesn’t work or that your hypothesis was incorrect. Document your findings, refine your hypothesis, and conduct another test.

Actionable Tip: Document Everything

Maintain a detailed log of all your A/B tests, including hypotheses, variations, duration, results, and learnings. This creates a valuable knowledge base and prevents repeating past experiments.
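A test log does not need special software. As one possible shape, the sketch below stores each experiment as a structured record that can be appended to a file or spreadsheet. The field names and the placeholder values are assumptions; adapt them to whatever your team actually tracks.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ExperimentRecord:
    name: str
    hypothesis: str
    variations: list
    primary_metric: str
    start_date: str = ""  # fill in when the test launches
    end_date: str = ""    # fill in when the test concludes
    result: str = "pending"
    learnings: list = field(default_factory=list)


record = ExperimentRecord(
    name="product-page-cta-text",
    hypothesis="Changing the button text to 'I Want This!' will increase the Add to Cart rate.",
    variations=["Add to Cart (control)", "I Want This! (variation)"],
    primary_metric="add_to_cart_rate",
)

# One JSON object per line is easy to append to a shared log file.
line = json.dumps(asdict(record))
print(line)
```

Keeping every test in the same format makes it much easier to search old experiments before proposing a new one.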

Essential Tools and Technologies for A/B Testing

To effectively implement A/B testing for e-commerce, you’ll need the right arsenal of tools. These platforms streamline the process from creating variations to analyzing complex data, making sophisticated experimentation accessible to businesses of all sizes.

1. Dedicated A/B Testing Platforms

These are the workhorses of the optimization world, designed specifically for running experiments on your website. They typically offer visual editors, traffic splitting, and robust analytics.

- Optimizely: A leading enterprise-grade platform known for its powerful features, server-side testing capabilities, and advanced personalization options. Ideal for larger organizations with complex testing needs.

- VWO (Visual Website Optimizer): A popular choice offering a comprehensive suite of CRO tools, including A/B testing, multivariate testing, heatmaps, session recordings, and personalization. It’s user-friendly for beginners but scalable for advanced users.

- Adobe Target: Part of the Adobe Experience Cloud, this is another enterprise solution for personalizing and optimizing experiences across multiple channels. It integrates deeply with other Adobe products.

- Google Optimize (and Alternatives): Google Optimize was a popular free tool, but Google discontinued it in September 2023. Many businesses are now exploring alternatives like Optimizely Web Experimentation, VWO, AB Tasty, or the A/B testing features available within their e-commerce platforms (e.g., Shopify apps).

- Site-Specific Tools: Some e-commerce platforms (like Shopify) have apps or built-in features that allow for simple A/B testing, especially for product page elements. These can be a great starting point for smaller stores.

Key Features to Look For: Visual editor (WYSIWYG), client-side and/or server-side testing, statistical significance calculators, audience segmentation, integration with analytics.

2. Analytics Platforms

Before and after any A/B test, robust analytics are indispensable. They help you identify problem areas (where to test) and validate your results.

- Google Analytics (GA4): Essential for understanding user behavior, traffic sources, conversion funnels, and identifying pages with high drop-off rates. GA4 offers more event-driven data, which is crucial for tracking specific interactions within your tests.

- Adobe Analytics: Another powerful enterprise analytics solution, offering deep insights into customer journeys and digital experiences.

Role in A/B Testing: Identify test opportunities, establish baseline metrics, segment audiences for targeted tests, and validate the overall impact of winning variations on broader business goals.

3. User Behavior Analysis Tools (Qualitative Data)

Quantitative data (numbers from analytics and A/B tests) tells you what is happening. Qualitative data helps you understand why.

- Heatmaps (e.g., Hotjar, Crazy Egg, Microsoft Clarity): Visually show where users click, move their mouse, and scroll on a page. This can reveal ignored content, confusing layouts, or areas of interest.

- Session Recordings (e.g., Hotjar, VWO Insights, FullStory): Replay actual user sessions to see how individuals interact with your site. This can expose usability issues, broken elements, or common paths to conversion/abandonment.

- Surveys & Feedback Widgets (e.g., Hotjar, Qualaroo): Directly ask users about their experience, pain points, or suggestions. This can generate hypotheses for A/B tests.

Role in A/B Testing: Generate hypotheses, uncover pain points, and provide context for quantitative results.

4. E-commerce Platform Integrations

Many modern e-commerce platforms offer native integrations or marketplaces for A/B testing tools, simplifying deployment and data collection.

- Shopify Apps: The Shopify App Store has numerous A/B testing apps that integrate seamlessly with your store, often designed for ease of use.

- WooCommerce Extensions: Similar to Shopify, WooCommerce offers extensions that enable A/B testing functionality.

Actionable Tip: Start with Free/Freemium, Scale Up

If you’re new to A/B testing for e-commerce, start with a freemium tool or a platform with a generous trial period to get comfortable with the process. As your testing needs become more sophisticated and your budget allows, consider investing in more powerful, dedicated enterprise solutions.

Common Pitfalls and Best Practices in A/B Testing for E-commerce

While A/B testing offers immense potential, it’s not without its challenges. Avoiding common mistakes and adhering to best practices will ensure your experiments are valid, your insights are accurate, and your optimization efforts yield true gains for your e-commerce business.

Common Pitfalls to Avoid:

- Stopping Tests Too Early: This is perhaps the most frequent and damaging mistake. Stopping a test as soon as one variation pulls ahead, without reaching statistical significance or sufficient duration, often leads to false positives (Type I errors). What looks like a winner might just be random fluctuation.

- Testing Too Many Variables at Once (for A/B Tests): In a pure A/B test, changing multiple elements (e.g., headline, image, and CTA color) simultaneously makes it impossible to know which specific change caused the observed difference.

- Ignoring Statistical Significance: If your results aren’t statistically significant, you can’t rule out that the observed difference is due to chance rather than your variation. Implementing non-significant “winners” is akin to guessing.

- Insufficient Sample Size: Running a test with too little traffic leaves it underpowered: random noise can dominate the outcome, and real improvements can go undetected.

- Testing Insignificant Changes: While every little bit helps, A/B testing minor changes (e.g., a slight shade variation of a button color), especially on a page that already converts well, might not yield a meaningful return on your testing effort. Small effects also need very large samples to detect. Prioritize high-impact elements.

- Not Accounting for External Factors: Holidays, major marketing campaigns, competitor promotions, or even breaking news can skew test results if not accounted for. Ensure your test runs during a “normal” period or factor in such events during analysis.

- Not Having a Clear Hypothesis: Testing without a clear “why” is just tinkering. A hypothesis guides your experiment and helps you learn even from “losing” tests.

- Assuming All Traffic is Equal: Not segmenting your audience can hide crucial insights. Mobile users may respond differently than desktop users, or new visitors differently than returning ones.
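The first pitfall in this list, stopping as soon as one variation pulls ahead, can be demonstrated with a small simulation. The sketch below runs A/A tests, where both versions share the same true conversion rate, and compares how often a “winner” is declared when you check after every batch of visitors versus only once at the end. All parameters (number of runs, looks, batch size, the 5% rate, and the seed) are arbitrary choices for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Two-sided two-proportion z-test at the given significance level."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0, 1):
        return False  # no variation in outcomes yet, nothing to test
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def simulate_aa_tests(runs=300, looks=10, batch=200, rate=0.05, seed=42):
    """Return (false-positive rate with peeking, false-positive rate at the end)."""
    rng = random.Random(seed)
    flagged_while_peeking = flagged_at_end = 0
    for _run in range(runs):
        conv_a = conv_b = n = 0
        ever_significant = False
        for _look in range(looks):
            # Both arms draw from the SAME true rate, so any "win" is a false positive.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            significant_now = is_significant(conv_a, n, conv_b, n)
            ever_significant = ever_significant or significant_now
        flagged_while_peeking += ever_significant
        flagged_at_end += significant_now  # result of the final look only
    return flagged_while_peeking / runs, flagged_at_end / runs


peeking_rate, final_rate = simulate_aa_tests()
print(f"declared a winner at any look: {peeking_rate:.1%}")
print(f"declared a winner at the end only: {final_rate:.1%}")
```

Checking repeatedly and stopping at the first significant reading can only raise the false-positive rate, because every extra look is another chance for noise to cross the threshold. This is why the sample size and duration should be fixed before launch.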

Best Practices for Robust A/B Testing for E-commerce:

- Focus on One Primary Metric: While you’ll track many metrics, identify the single most important one for your test (e.g., ‘Add to Cart’ rate, unique purchases, conversion rate). This helps maintain focus.

- Ensure Statistical Significance: Always aim for a 95% or higher confidence level. Use A/B testing calculators to determine required sample size and duration before launching your test.

- Run Tests for Full Business Cycles: Ensure your test runs for at least one full week (to cover weekdays and weekends) and ideally longer (e.g., two weeks) to smooth out daily fluctuations and account for any weekly patterns in user behavior.

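- Segment Your Results: After the test, analyze how different user segments (new vs. returning, mobile vs. desktop, specific traffic sources) responded to the variations. You might find a winner for one segment even if the overall result was inconclusive. Treat such segment-level wins as hypotheses for a follow-up test rather than final conclusions, since checking many segments increases the odds that one of them looks like a winner purely by chance.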

- Document Everything: Maintain a comprehensive log of all tests: hypothesis, design mocks, start/end dates, sample size, primary metric, results, statistical significance, and key learnings. This builds an invaluable institutional knowledge base.

- Embrace “Losing” Tests: A test where the variation doesn’t win isn’t a failure; it’s a learning opportunity. You’ve gained knowledge about what doesn’t resonate with your audience, which is equally valuable.

- Prioritize Tests Strategically: Use frameworks (like PIE: Potential, Importance, Ease) to prioritize tests. Focus on high-potential changes that are important to your business goals and relatively easy to implement.

- Continuously Iterate: Optimization is not a one-time project, but an ongoing process. A winning test often leads to new hypotheses and further improvements. Build a culture of continuous testing.

- Validate with Qualitative Data: Use heatmaps, session recordings, and user surveys in conjunction with your A/B test results. If your variation won, understanding why from qualitative data can inform future tests.

Actionable Tip: Create an Optimization Roadmap

Don’t just run tests ad-hoc. Develop a structured optimization roadmap. List potential test ideas, prioritize them, schedule them, and assign ownership. This brings discipline and long-term vision to your A/B testing efforts.

Beyond A/B Testing: Advanced Optimization Strategies

While A/B testing for e-commerce is the foundation of data-driven optimization, the landscape of conversion rate optimization (CRO) extends further. Once you’ve mastered the art of A/B testing, you can explore more sophisticated techniques to personalize and dynamically optimize your store.

Multivariate Testing (MVT)

Multivariate testing (MVT) is an advanced form of experimentation that allows you to test multiple variables simultaneously to determine which combination of elements performs best.

- How it Works: Instead of just comparing A vs. B, MVT tests all possible combinations of multiple changes on a single page. For example, testing three headlines and two images on a product page would result in 3 x 2 = 6 different variations.

- When to Use It: MVT is ideal when you have several elements on a page that you suspect interact with each other, and you want to find the optimal combination. It requires significantly more traffic than A/B testing to achieve statistical significance due to the larger number of variations.

- Benefit: MVT can uncover synergies between elements that individual A/B tests might miss, providing a more holistic understanding of page optimization.
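The combination count from the example above (three headlines and two images) can be enumerated directly, which also shows why MVT needs so much more traffic. The element labels and the monthly visitor figure below are placeholders.

```python
from itertools import product

headlines = ["Headline A", "Headline B", "Headline C"]
images = ["Image 1", "Image 2"]

# Every headline paired with every image: 3 x 2 = 6 variations.
combinations = list(product(headlines, images))
for headline, image in combinations:
    print(headline, "+", image)

# With an even split, each variation receives only a fraction of the traffic.
monthly_visitors = 100_000  # placeholder figure
visitors_per_variation = monthly_visitors // len(combinations)
print(len(combinations), visitors_per_variation)
```

Adding a third element with just two options would double the count to 12, halving the traffic available to each variation again.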

Personalization

Personalization takes optimization a step further by tailoring content, offers, and experiences to individual users based on their behavior, demographics, and preferences.

- Dynamic Content: Displaying different hero banners, product recommendations, or promotions based on a user’s browsing history, location, or past purchases. For example, showing winter gear to visitors from cold climates or cross-selling accessories based on items in their cart.

- AI-Driven Recommendations: Utilizing machine learning algorithms to suggest products that are highly relevant to each user, leading to increased average order value and repeat purchases.

- Targeted Messaging: Adjusting copy and calls-to-action to reflect a user’s stage in the buying journey or their specific interests (e.g., offering a first-time discount to new visitors vs. a loyalty reward to returning customers).

- Benefit: Personalization creates a more relevant and engaging shopping experience, mimicking the tailored service of a physical store. This can significantly boost engagement, conversions, and customer loyalty.

AI-Driven Optimization

The latest frontier in optimization leverages artificial intelligence and machine learning to automate and enhance the testing process.

- Automated Experimentation: AI algorithms can dynamically adjust traffic distribution to variations that are performing better, ensuring that more users see the winning experience faster.

- Predictive Analytics: AI can analyze vast datasets to predict which changes are most likely to succeed, helping you generate more effective hypotheses.

- Automated Content Generation: Some AI tools can even generate different variations of headlines, product descriptions, or ad copy, speeding up the creation of testable elements.

- Benefit: AI-driven optimization reduces the manual effort involved in testing, accelerates learning, and can achieve higher conversion rates by continuously adapting to user behavior in real-time.
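The dynamic traffic allocation described under Automated Experimentation is often implemented with a multi-armed bandit algorithm. The sketch below uses Thompson sampling, one common bandit technique. It is not a description of how any specific vendor's product works, and the true conversion rates, visitor count, and seed are invented for the simulation.

```python
import random


def thompson_sampling(true_rates, visitors=10_000, seed=7):
    """Simulate adaptive allocation; return how many visitors saw each variation."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(visitors):
        # Draw a plausible conversion rate for each variation from its
        # Beta posterior (uniform prior), then show the highest draw.
        draws = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
        arm = draws.index(max(draws))
        # Simulate whether this visitor converts, using the hidden true rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


# Hypothetical true rates: control converts at 5%, variation at 10%.
shown = thompson_sampling([0.05, 0.10])
print(shown)
```

As evidence accumulates, the better-performing variation is shown more and more often. The trade-off is that the weaker variation collects less data, so this approach suits ongoing optimization better than tests where you need a precise measurement of both versions.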

Actionable Tip: Master A/B Testing First

While MVT, personalization, and AI-driven optimization offer powerful capabilities, they build upon the fundamental principles of A/B testing. Before diving into these advanced strategies, ensure your team has a solid grasp of A/B testing methodology, statistical significance, and data interpretation. A strong A/B testing foundation will make your transition to more complex optimization techniques much smoother and more effective.

Conclusion

In the dynamic world of e-commerce, standing still is akin to moving backward. The ability to continually adapt, improve, and refine your online store based on tangible customer behavior is not just an advantage; it’s a necessity. A/B testing for e-commerce provides the scientific framework to achieve this, transforming guesswork into data-backed decisions that drive real, measurable growth.

From optimizing critical product pages and streamlining your checkout flow to enhancing site-wide elements and understanding the subtle nuances of customer psychology, A/B testing empowers you to unlock higher conversion rates, boost average order value, and cultivate a superior user experience. By embracing a systematic approach—defining clear goals, formulating robust hypotheses, running statistically significant tests, and learning from every outcome—you can build a resilient and highly optimized e-commerce operation.

Remember, the journey of optimization is continuous. It’s about constant iteration, perpetual learning, and an unwavering commitment to understanding what truly resonates with your audience. Start small, celebrate every win (and learn from every loss), and consistently apply the principles outlined in this guide. The insights gained from A/B testing are not just about making more sales today; they’re about building a stronger, more customer-centric, and more profitable e-commerce business for tomorrow.

Your Next Step: Don’t delay your optimization journey. Pick one high-impact area on your e-commerce store, formulate your first hypothesis, and launch your initial A/B test. The sooner you start, the sooner you’ll begin to uncover the hidden potential within your online business. Happy testing!

About the Author: Alex Chen

Alex Chen is a seasoned e-commerce strategist and conversion rate optimization (CRO) expert with over a decade of experience helping online businesses thrive. Specializing in data-driven growth, Alex empowers brands to unlock their full potential through meticulous A/B testing, user experience enhancements, and advanced analytics. Connect with Alex on LinkedIn.

Frequently Asked Questions